In digital life, the next click has never been entirely accidental. Search engines, feeds, storefronts, and streaming apps have long tried to predict what will hold attention for one more moment. What is changing now is the speed, confidence, and reach of those predictions. AI systems can absorb behavior across larger datasets, infer intent from shorter signals, and reshape what appears most relevant before a person has fully decided what to do next.

That shift could alter habits across far more than entertainment or shopping. It could influence which sources get noticed, which creators get discovered, which products feel credible, and which decisions are made without much browsing at all. These 15 developments show how AI could steadily change what people click on next, and why that change may feel convenient and unsettling at the same time.

Search Could Deliver Answers Before Links

One of the clearest changes is happening in search itself. Instead of presenting a page filled mainly with blue links, AI-powered search increasingly puts summaries, extracted facts, and follow-up suggestions at the top of the screen. That changes the role of a click. In many cases, the click stops being the first step in finding an answer and becomes an optional second step taken only when the summary feels incomplete. For publishers, retailers, and independent websites, that seemingly small design shift can matter enormously because visibility no longer guarantees a visit.

The behavior is already measurable. Pew Research Center found that Google users who encountered an AI summary clicked on traditional search links less often than users who did not see one. Bain has also reported growing reliance on zero-click behavior, where people get enough information directly on the results page to avoid visiting another site. Over time, AI search may train users to treat outside links less like destinations and more like supporting evidence, clicked only when deeper trust, detail, or comparison is needed.

Feeds Could Learn Faster Than Follows Ever Did

Traditional social media once leaned heavily on explicit signals such as who a person followed or which pages they liked. AI-driven feeds work differently. They can infer interests from pauses, rewatches, scroll speed, dwell time, shares, device context, and countless other tiny behaviors that feel too minor to matter on their own. The result is a feed that can adapt even when a person never actively says what they want. Clicking becomes less about choosing from a social graph and more about responding to a machine’s evolving guess.

That change can make platforms feel eerily intuitive. A person may watch one niche clip, pause on another, and suddenly find an entire feed rebuilt around a mood, hobby, or identity fragment. Meta has publicly tied major infrastructure investments to ranking and recommendation models, while YouTube continues to explain recommendations as a system designed to surface the most relevant content at a given moment. As these systems become more sensitive, the next click may increasingly come from what the platform inferred in silence rather than from anything a user deliberately selected.

“Next” Could Become a Conversation, Not a Menu

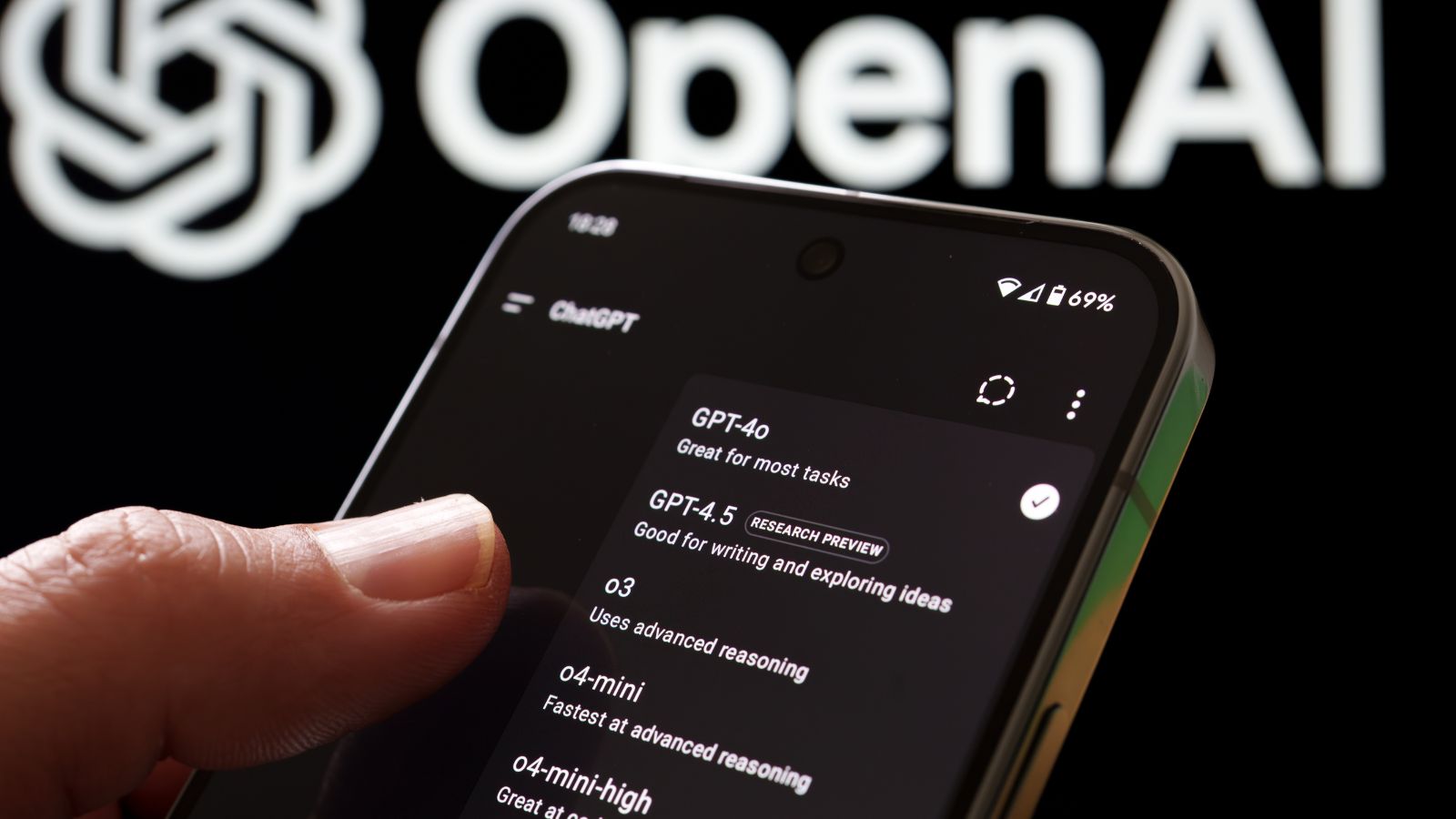

AI is also changing recommendation from a static list into a dialogue. Instead of choosing among thumbnails or headlines, people are beginning to ask systems for something more specific: a lighter comedy than the last movie, a gift for a stubborn parent, a running shoe for narrow feet, a playlist for a late flight. In that model, the next click is not prompted by scanning options alone. It comes after an exchange in which the system narrows, reframes, and interprets the request in real time.

That matters because conversation feels more personal than browsing. OpenAI has rolled out shopping-oriented experiences that build product guides from natural-language requests, and Amazon’s Rufus is designed to answer broad and highly specific shopping questions inside the retail experience itself. Spotify has also moved in this direction by letting users steer its AI DJ with voice prompts. As more platforms adopt conversational recommendation, clicks may shift from reactive taps on visible choices to trust-based responses to AI suggestions that feel closer to advice than search.

Shopping Clicks Could Move Closer to the Checkout

AI recommendation is likely to compress the shopping journey. For years, online buying involved multiple tabs, review sites, price comparisons, and a fair amount of indecision. AI tools promise to shorten that process by bundling discovery, comparison, fit guidance, and purchase timing into one flow. When a system can explain product differences, show likely matches, track prices, and suggest when to buy, the most important click may no longer be “learn more.” It may be “add to cart.”

That shift is already visible in major platforms. Google has promoted AI shopping tools that combine visual inspiration, product guidance, virtual try-on, and agentic checkout features. Amazon has tested “Buy for Me,” which can help customers purchase select products from other brands’ sites through the Amazon app. Salesforce’s retail reporting has also pointed to large amounts of holiday spending influenced by AI and agent systems. In practical terms, AI could reduce the number of exploratory clicks people make while increasing the share of clicks that happen later in the funnel, when purchase intent is already shaped.

Sponsored Suggestions Could Feel More Like Help Than Ads

As AI gets better at understanding intent, advertising may become harder to separate from assistance. The old distinction was easier to spot: an ad looked like an ad, and a recommendation looked like content. AI systems blur that line by generating highly relevant suggestions that appear at the exact moment a person is deciding what to do next. A sponsored result placed inside a conversational response or an AI-curated discovery module may feel less like interruption and more like convenient guidance.

That raises an old consumer-protection problem in a newer form. The FTC has long warned that endorsements and native advertising must be truthful and not misleading, especially when commercial influence is not obvious. At the same time, Google’s AI-powered ad products now aim to widen relevance by matching more search intent and dynamically customizing landing paths. The more precisely AI anticipates what a person is ready to click, the more persuasive sponsored recommendations can become. The challenge will not just be whether ads work better, but whether people can still tell when a helpful suggestion is also a paid nudge.

Autoplay Could Become Even Better at Preventing Exit

One of AI’s quietest powers is reducing friction at the exact moment a person might stop. Autoplay, up-next modules, and endless feed transitions already keep attention moving forward with minimal effort. Add stronger personalization, and the system gets better at choosing the one item most likely to prevent a break. In that environment, the next click becomes less necessary because the platform is increasingly willing to click, queue, or continue on the user’s behalf.

This changes behavior in subtle ways. YouTube openly describes recommendations as central to both its homepage and Up Next panel, and its autoplay feature is explicitly designed to make choosing what comes next easier by automatically playing another video. When these systems are trained on ever-richer behavioral data, they become not just recommendation engines but retention engines. The most important user action may shift from selecting the next item to failing to interrupt what AI has already lined up, which can make passive consumption feel oddly active even when almost no deliberate choice is being made.

Discovery Could Tilt Toward What AI Can Predict, Not What Is New

AI recommendation excels at predicting probable engagement. That sounds useful, but it can also create a structural advantage for content that resembles what has already performed well. Familiar formats, recognizable styles, and reliable engagement patterns are easier for ranking systems to trust than unusual work with uncertain upside. That means the next click may increasingly favor what feels statistically safe instead of what is genuinely fresh, strange, or original.

This concern is especially important for smaller creators and independent publishers. A recommendation engine does not need to dislike originality to disadvantage it; it only needs to rank predicted response above novelty. Research on recommender systems and filter bubbles continues to debate the scale of the problem, but the broader concern is well established: systems trained on past behavior can reinforce existing exposure patterns unless diversity is actively designed into them. In practical terms, audiences may still discover new things through AI, but the path to discovery could become narrower, with fewer accidental clicks on the unexpected and more guided clicks on what resembles prior success.

Source Visits Could Shrink as Summaries Get Better

AI summaries do not have to replace original sources completely to reduce their traffic. They only need to become good enough for routine questions. When a platform presents a concise answer, a short synthesis, or a shopping comparison that seems sufficient, the incentive to click through falls sharply. The source still matters because it trained or informed the answer, but it may no longer receive the same reward in audience, ad impressions, or subscriber conversion.

That tension is now at the center of publisher anxiety. Reuters recently reported that Italy’s media regulator asked the European Commission to investigate Google’s AI search tools over publisher concerns, including the risk that AI-generated answers reduce traffic to original outlets. Pew’s 2025 findings on lower link-click rates in searches with AI summaries reinforce that broader pattern. If this continues, people may come to expect more information without leaving the platform that surfaced it. The click would not disappear entirely, but it would become reserved for verification, controversy, or premium depth rather than everyday curiosity.

Control Tools Could Matter More Than Ever

As recommendation systems get more assertive, the ability to reset or tune them becomes more valuable. In earlier eras, users could often fix a distorted feed by unfollowing a few accounts or clearing browser history. AI systems are more persistent because they build patterns from many signals across time. A few mistaken clicks, a shared device, or a temporary obsession can reshape what appears next for weeks. That makes user controls less like optional settings and more like digital hygiene.

Platforms appear to recognize this. Meta has tested recommendation reset tools for Instagram, specifically acknowledging that people may want a fresh start in Feed, Explore, and Reels. Spotify has also expanded explanations and controls around recommendations. In Europe, the Digital Services Act goes further by requiring very large platforms to offer more transparency around feeds and, in some cases, a non-personalized option. If AI increasingly determines the next click, then the right to interrupt, reset, and understand that process could become one of the most important user protections on the modern web.

AI Could Broaden Discovery for Some People and Narrow It for Others

The future of clicking will not be uniformly narrower. In some cases, AI may expand what people see by connecting them with content they would never have searched for on their own. Recommendation can be liberating when it rescues users from generic popularity rankings and surfaces a strong match from deep in the catalog. Streaming, shopping, and music platforms have long argued that personalization helps people find relevant options inside overwhelming abundance.

Yet the same mechanism can also shrink exposure if it overweights prior behavior. Academic work on filter bubbles and recommendation effects shows a more complicated picture than popular rhetoric suggests. Some studies find evidence of narrowed exposure; others suggest the effects are weaker or more mixed than feared. The important point is that AI can do both, often on the same platform. The next click might become more adventurous for a curious user and more repetitive for someone whose habits are already predictable. The technology does not eliminate choice, but it can subtly rearrange which choices feel easy, visible, or worth exploring.

Context Signals Could Quietly Outrank Explicit Intent

People often think they click because they want something specific. AI systems increasingly act on a broader theory: that context can reveal just as much as declared intent. Time of day, recent activity, location, device type, language, social context, and even how quickly someone is moving through an app can influence what gets shown next. That means a person may believe they are making an independent choice while reacting to a version of the interface that has already been reshaped around situational cues.

This is part of what makes modern recommendation feel uncanny. The system is not only asking what content matches a topic; it is estimating what kind of suggestion is most clickable at that exact moment. A commuter may see shorter videos, a late-night shopper may get more urgent recommendations, and a weekend browser may receive inspiration-oriented prompts instead of practical ones. As models get better at reading context, the next click may be determined less by broad identity categories and more by momentary states that are inferred and acted upon before the person fully notices them.

Trust Signals Could Decide More Clicks Than Brand Names

In a more crowded AI-mediated web, trust may become a decisive ranking factor. When summaries, feeds, and conversational agents compress information, people have less time to inspect a source closely. They may rely instead on quick trust cues: labels, reputation snippets, review density, recognizable publishers, expert framing, or signs that content is original rather than synthetic. In other words, AI may not eliminate brand power, but it could shift trust from slow evaluation to fast heuristics attached to whatever appears next.

Platforms are already responding to this pressure. Meta has described efforts to label certain AI-generated images and manipulated media more clearly across its apps. That is partly a misinformation issue, but it is also a discovery issue: once users suspect that some content is synthetic or context-poor, labels become part of the clicking decision itself. Over time, the next click may go not merely to the most relevant suggestion, but to the suggestion that feels safest to trust at speed. In an AI-heavy environment, visible provenance may become as influential as relevance.

Bundled Results Could Replace Serial Browsing

For years, the web trained people to compare by opening many tabs. AI is pushing toward a different habit: letting the system cluster, summarize, and organize possibilities before any tab opens at all. That changes what a click means. Instead of moving from result to result, users may increasingly click into a pre-grouped recommendation set that already contains the major tradeoffs, alternatives, and explanations. The click becomes an entry into a decision package rather than a step in a long comparison trail.

Netflix’s work on recommendations and results organization points to this broader logic even outside classic web search. Search systems do not merely retrieve matches anymore; they organize likely intent. Shopping experiences are moving the same way, with AI-generated buyer guides and grouped comparisons replacing some of the messy work users used to perform manually. This can save time, but it also shifts power toward whichever system defines the comparison frame first. The next click may still feel open-ended, while in reality the menu has already been narrowed, interpreted, and ordered by an AI layer.

AI Agents Could Start Clicking for People

The most dramatic change may come when recommendation goes beyond both suggestion and summary. AI agents are beginning to act. Instead of telling users what to click, some systems are being built to conduct research, track prices, complete checkouts, or execute tasks once approval is given. In that world, the next click may belong to software rather than to the person sitting at the screen. Human choice remains, but it becomes supervisory rather than hands-on.

The early signs are already visible. Google has promoted agentic checkout in shopping experiences, and Amazon’s Buy for Me feature is explicitly designed to help people purchase select products from other brands through Amazon’s own interface. OpenAI has also described shopping research and instant-checkout directions that point toward agentic commerce. Once these systems mature, people may click less often not because they are less engaged, but because they have delegated the tedious parts of browsing. The web would still revolve around decisions, but many intermediary clicks could disappear into automated action.

Regulation Could Reshape What “Recommended” Means

The future of clicking will not be determined by technology alone. Regulation is starting to influence how recommendation systems must operate, explain themselves, and offer alternatives. In Europe especially, the policy direction is clear: users should have more transparency about why content appears in feeds and more meaningful options to opt out of some forms of personalization. That may sound procedural, but it could change interface design in concrete ways.

The Digital Services Act requires greater transparency and control over feeds, including information about the main parameters used to rank content and, for very large platforms, at least one recommendation option that is not based on profiling. Regulators are also testing whether platform interfaces make it too difficult to choose non-personalized experiences. If those pressures continue, the next click may increasingly be shaped by a new tension between optimization and accountability. AI will still predict what people are most likely to choose, but platforms may be forced to reveal more about that prediction and give users more chances to reject it.

The Biggest Shift May Be Psychological

The deepest change may not be technical at all. It may be the gradual normalization of being guided. When AI becomes good at anticipating interests, smoothing decisions, and reducing uncertainty, people may stop noticing how often the path ahead has been arranged for them. Clicking will still feel voluntary, but the experience surrounding it may become more curated, more predictive, and less exploratory than the open web once encouraged.

That does not make the change automatically bad. Plenty of people genuinely want less friction, fewer tabs, better suggestions, and faster decisions. But convenience has a habit of hiding tradeoffs. If AI determines more of what gets surfaced next, then curiosity itself may start to operate differently. People may browse less widely, compare less manually, and trust platform-mediated answers more quickly. The next click will remain a tiny action, but it could say more than ever about who controls attention: the individual making a choice, or the system that quietly shaped the menu first.
