The old scam cliché used to be obvious: bad grammar, strange formatting, and a story that fell apart after two questions. That version is fading. Cheap generative tools can now clone a voice, polish a fake website, script a believable chat, and turn an ordinary social media post into a tailored con. Regulators and investigators have spent the last two years warning that AI is not creating an entirely new criminal world so much as making familiar fraud feel alarmingly normal.

What follows are 18 scam patterns that have become harder to spot precisely because they borrow the tone, speed, and realism of legitimate digital life. Some target households, others target workplaces, and a few do both. Together, they show how deception has become less clumsy and far more convincing.

Voice-Cloned Family Emergencies

One of the most unsettling AI scams is also one of the simplest. A scammer does not need a studio session or a long recording to imitate a loved one anymore. Consumer watchdogs have warned that a short audio clip pulled from social media can be enough to clone a voice well enough to trigger panic, especially when the call opens with fear instead of detail. The setup is brutally efficient: a sobbing voice, a car crash, an arrest, a hospital, a demand for secrecy, then a request for money or account access before anyone has time to think.

What makes this scam harder to spot is not technical perfection but emotional timing. A parent or grandparent who hears what sounds like a child in distress often reacts first and verifies later. That is exactly the gap scammers exploit. The cloned voice does not need to survive a forensic test; it only needs to sound plausible for thirty seconds. In practice, that is often enough to overpower the old instinct that something feels “off,” especially when the caller already knows names, family roles, or recent events taken from public posts.

Deepfake Boss Payment Requests

Corporate fraud has always depended on authority, and AI has made authority easier to counterfeit. The modern version is no longer just a spoofed email from a chief executive. It can be a voice message, a video call, or a group meeting where the faces on screen appear familiar enough to lower resistance. In one of the clearest examples yet, a finance employee at Arup was reportedly tricked into transferring roughly HK$200 million after a deepfake video call that appeared to include senior colleagues. That case landed with such force because it showed how quickly internal trust can be weaponized.

The danger here is not only the fake face or cloned voice. It is the way AI layers realism onto a standard business-pressure scenario: confidential transfer, tight deadline, unusual urgency, no time to run it through the usual chain. Add the wider rise in AI-enhanced email and social engineering, and the scam becomes harder to separate from legitimate executive behavior, especially in remote workplaces where rushed approvals are already common. The result is a fraud that looks less like hacking and more like a normal workday taking a catastrophic turn.

Government Impostors With Synthetic Voices

Impersonation scams used to rely on callers sounding authoritative. Now they can sound familiar, specific, and unnervingly well briefed. The FBI warned in 2025 that malicious actors were impersonating senior U.S. officials through text messages and AI-generated voice messages, often targeting current or former public officials and then pivoting the conversation onto secondary encrypted platforms. That is an important shift. The goal is not simply to scare someone with a fake badge; it is to use realistic communication to build trust long enough to steal credentials, money, or contact networks.

This is why the scam keeps getting harder to recognize. The message may reference a topic the target genuinely knows, arrive from a spoofed number, and use a tone that feels more polished than the average fraud attempt. By the time the victim realizes the conversation has drifted into manipulation, the attacker may already have established credibility. These scams also teach a broader lesson about AI fraud: convincing language is sometimes more dangerous than dramatic theatrics. A caller who sounds calm, informed, and slightly busy can do far more damage than one who sounds obviously fake.

Celebrity Investment Endorsements That Never Happened

Deepfake celebrity scams thrive because fame still functions as shorthand for trust. A recognizable face in a social ad or video clip can make a fraudulent scheme feel pre-vetted before any facts are checked. Regulators in Australia and New Zealand have repeatedly warned that scammers are using celebrity images, fake news pages, and deepfake interview footage to push bogus investment platforms. The mechanics are familiar by now: an ad appears to show a well-known figure discussing easy profits, the click leads to a site dressed up like a real news outlet, and the “opportunity” quickly turns into account registration, a starter deposit, and relentless follow-up.

The psychological trick is remarkably old-fashioned. The celebrity is not there to explain the investment; the celebrity is there to make people skip the step where they would normally ask whether the company is real. Once that skip happens, everything downstream gets easier. Victims are more willing to believe the broker, the dashboard, the follow-up call, and even the fake early gain. Regulators have responded with takedowns by the hundreds, but the underlying problem remains: AI has made it cheap to manufacture the kind of social proof that once took real advertising budgets and real public figures.

“AI Trading Bot” Wealth Pitches

Some scams do not just use AI behind the scenes; they sell AI itself as the fantasy. These pitches promise that a bot, model, or automated trading engine can quietly generate passive income while the victim sleeps. The language is usually hyper-modern and oddly frictionless: no coding, no stress, no learning curve, just intelligent automation doing the work of a professional trader. Australia’s securities regulator has warned that scammers are increasingly using polished videos, fake endorsements, and targeted social ads to sell exactly this kind of fantasy, often wrapping old investment fraud in the vocabulary of machine intelligence.

That framing matters because it flatters the victim rather than frightening them. Instead of saying “act now or lose everything,” the scam says, in effect, “smart people are using the future already.” It feels aspirational, not desperate. That makes skepticism harder to sustain. The hook is often small at first, maybe a form submission or a modest initial deposit, but the sales pitch gets more aggressive once contact details are captured. AI becomes the perfect buzzword for these schemes because it sounds technical enough to discourage questions and exciting enough to suspend common sense.

Romance Scams With Synthetic Charm

Romance scams have always depended on patience, but AI has made patience easier to fake. Generative tools can help scammers produce polished first messages, maintain a consistent tone across long conversations, and quickly adapt to a target’s interests, profession, or mood. The result is not just more messages; it is better messages. The FTC reported that nearly 60% of people who reported losing money to romance scams in 2025 said the scheme started on social media. Around the same time, McAfee’s 2026 research found that one in seven American adults reported losing money to an online dating or romance scam.

The most striking detail is not simply the money. It is how natural the interaction can feel before the financial pivot arrives. McAfee said its labs saw fake AI dating bots surge, with some users receiving more than 60 messages in 12 hours, sometimes even when they had no profile photo. That hints at the scale problem: fraud no longer needs a patient human operator for every early-stage conversation. Once attention is captured, the scam can move into its familiar shape—fast intimacy, private messaging apps, an emergency, an investment tip, or both. The script is old, but the delivery is smoother than ever.

Fake Profit Dashboards and Recovery Traps

One of the cruelest AI-era scams is the one that rewards trust before stealing it. Regulators keep describing the same pattern: a victim is shown a professional-looking dashboard, sees apparent gains, and may even be allowed to withdraw a small amount. That small success is not generosity; it is design. It turns suspicion into commitment. The FTC has warned that fake investment platforms on social media often display false profits for exactly this reason, while New Zealand’s Financial Markets Authority has described schemes where victims are first nudged into a modest deposit and later blocked from withdrawing unless they pay more fees.

The cruelty deepens after the first loss. Some victims are contacted again by supposed recovery specialists who promise to trace or retrieve the missing funds for yet another fee. By that point, people are not just financially exposed; they are emotionally primed to believe that one more payment might fix the mistake. The FBI’s Operation Level Up found that many victims of crypto investment fraud did not even realize they were being scammed when contacted by investigators. That says a lot about the modern fraud environment. The fake interface is no longer a flimsy prop. It can feel like evidence.

Recruiters Who Seem Exceptionally Professional

Employment scams no longer sound sloppy enough to raise suspicion. That is part of the problem. The FTC has warned repeatedly that job scammers want money, personal information, or both, and they increasingly reach people through the same channels legitimate employers use: job platforms, ads, texts, and social media. What changes in the AI era is the finish. Recruiter outreach can now be unusually well written, correctly formatted, and tailored to the candidate’s field in a way that once required more effort than many scammers were willing to spend.

That broader polish matters because job searching already forces people to normalize cold outreach, fast follow-ups, and requests for personal details. A scammer does not need to create a perfect company to succeed; they only need to look as organized as a busy employer. The wider cyber landscape helps explain why this works. Microsoft has reported that AI-automated phishing emails achieved dramatically higher click-through rates than standard attempts, which suggests that cleaner language and better personalization genuinely change user behavior. In a hiring scam, that translates into fake interviews, bogus onboarding forms, or requests for payment that arrive wrapped in the tone of opportunity rather than obvious fraud.

Task Scams Disguised as Easy Remote Work

Task scams are a sharp example of how fraud has learned to copy platform design instead of just copying language. The FTC describes them as game-like schemes in which people are told to complete simple online tasks—liking videos, rating product images, or “optimizing” items in an app—while a screen shows commissions accumulating in real time. Sets of forty, level-ups, bonus tasks, and small early payouts all make the fake job feel interactive and measurable. Reported losses to job scams more than tripled from 2020 to 2023, and in just the first half of 2024, reported losses topped $220 million.

The genius of the scam is that it turns exploitation into progress. Victims are not merely asked for money out of nowhere; they are told they need to deposit funds to unlock higher earnings, finish a task set, or withdraw what appears to already belong to them. That structure feels less like theft than a temporary inconvenience inside a functioning system. It is also perfectly matched to AI-assisted persuasion. The chat support, motivational prompts, and micro-explanations can all be generated at scale, making the fake workplace feel attentive, responsive, and alive long enough to keep people paying into it.

Phishing Messages That No Longer Look Phishy

For years, people were told to spot phishing by looking for clumsy grammar, awkward greetings, and formatting errors. That advice is aging badly. Microsoft’s 2025 Digital Defense Report said AI-automated phishing emails achieved a 54% click-through rate compared with 12% for standard attempts, a gap large enough to explain why older detection habits are failing. Around the same time, Anthropic said it had blocked attempts to misuse Claude for cybercrime, including drafting phishing emails. In other words, the threat is no longer hypothetical. Major AI companies and major defenders are documenting the same shift from opposite sides.

What changes first is tone. The email sounds closer to how a real colleague, recruiter, bank, or software vendor would write. It is less theatrical, less broken, and more context aware. That does not mean every AI-crafted phishing attempt is brilliant. It means the median scam message is now less likely to self-destruct before the victim even reaches the link. Once the language barrier drops, people have to rely more on process than instinct: checking the sender through a separate channel, visiting the real website directly, and distrusting urgency even when the wording feels polished enough to pass a casual glance.

Customer Support Agents Who Were Never Real

Fake customer support has become one of the most adaptable scam categories online because it can attach itself to almost any moment of confusion. Google said in 2025 that customer support scams were evolving, with fraudsters impersonating legitimate support channels while exploiting social engineering and web vulnerabilities to display fake phone numbers. That matters because the scam no longer begins with an obviously shady site. It can begin when someone is already looking for help with a real company, which means their guard is lower and their willingness to follow instructions is higher.

Once contact is made, the fake support flow often feels eerily normal. There may be a case number, calm step-by-step instructions, a plausible hold message, and a request to verify information that sounds routine. This is where AI improves the scam’s texture. It can help generate consistent scripts, faster responses, and more natural-sounding explanations across thousands of interactions. The victim is not persuaded by one giant lie; they are guided through a series of tiny moments that each feel legitimate. By the time remote access, card details, or account codes are requested, the fraudulent relationship already feels established.

Tech Support Pop-Ups Built to Panic

The classic tech support scam is a fake security warning that tells a user the computer is infected and demands an immediate call. That formula still works because fear compresses judgment. The FTC continues to warn that legitimate companies will not contact people to say there is a problem with their device and that real security warnings will not tell them to call a number. Google has added another disturbing layer to the picture: tech support scam pages can use full-screen takeovers and even disable keyboard and mouse input to heighten the sense of crisis.

That behavior shows why these scams remain effective. The pop-up is not only pretending to be security software; it is trying to create the bodily experience of losing control. Once panic sets in, the fake phone number becomes an escape hatch. Google’s security team has said the average malicious site it encounters in this category exists for less than ten minutes, which helps explain why static blocklists struggle to keep up. The scam is fleeting, theatrical, and disposable. It does not need a long shelf life. It only needs a short burst of authority and panic to turn a browser tab into a cash machine.

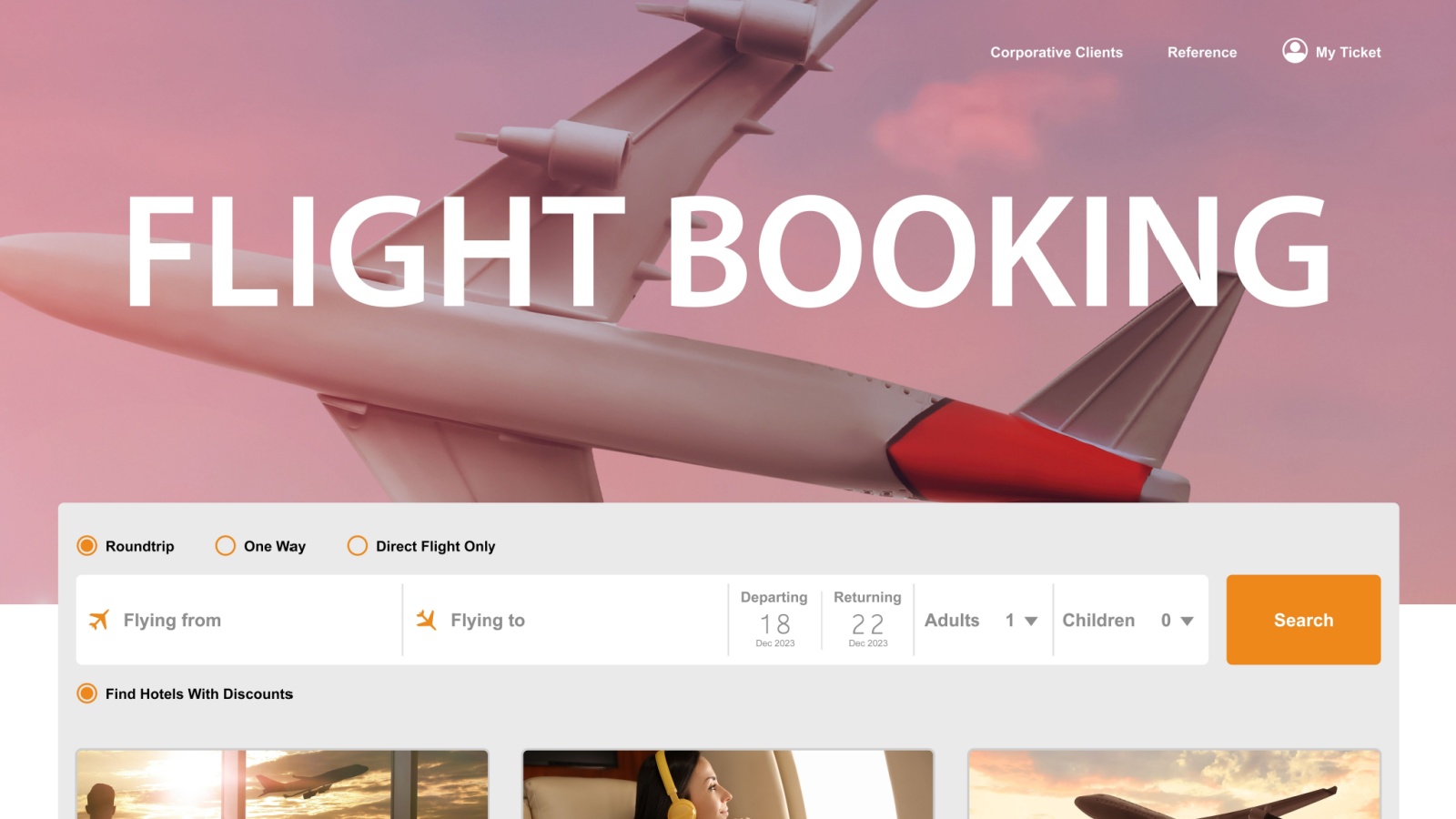

Travel Booking Sites That Copy the Real Thing

Travel scams work best when people are already rushing, comparing prices, and making decisions on small screens. Google said in 2025 that it had observed a spike in fake travel websites ahead of the vacation season, with deceptive sites imitating well-known hotels or posing as legitimate travel agencies. The bait is predictable but effective: impossible discounts, exclusive packages, last-minute urgency, or event-driven scarcity. Messaging apps and phone calls often enter the picture too, especially when the scammer wants to move the victim away from the booking platform and into a channel where pressure is easier to apply.

What makes these scams especially difficult to catch is how closely they now mimic ordinary travel friction. Real bookings involve third-party agents, mobile confirmations, follow-up calls, fees, and constant price fluctuation. Fraud blends into that noise. AI raises the ceiling on how professional the fake site and follow-up messages can look, but the deeper advantage is contextual. Travelers expect complexity, and scammers hide inside it. A slightly off domain, a strangely generous deal, or a request to pay outside the main platform can all be explained away in the moment as quirks of the industry rather than signs of a trap.

Rental Listings With Polished Backstories

Housing fraud has always preyed on urgency, but AI-era rental scams are better at sounding organized rather than desperate. The FTC says scammers create fake rental listings by copying legitimate ads, changing the contact details, or building entirely new listings with attractive photos and below-market rents. In the 12 months ending June 2025, about half of people who reported a rental scam said it started with a fake ad on Facebook, and adults ages 18 to 29 were three times more likely than others to report losing money to this type of scam.

The old warning sign was often an obviously strange message from an implausible landlord. That is less dependable now. Generative tools make it easier to produce cleaner property descriptions, calmer email exchanges, and believable excuses about why a viewing cannot happen yet. Even when the pictures are stolen rather than AI-made, the surrounding story can feel far more coherent. That is the real danger: the scam no longer depends on one glaring mistake. It survives by removing the small bits of sloppiness that used to make renters pause before sending deposits, application fees, or identity documents.

Social Shopping Ads and Clone Storefronts

Shopping scams remain one of the clearest examples of AI making old fraud feel modern. The FTC reported that in 2025, more than 40% of people who lost money to a scam on social media said it started when they ordered something from an ad. Many landed on unfamiliar sites, while others were pushed toward pages impersonating well-known brands with steep discounts. Most said the product never arrived. When it did, it was often counterfeit or dramatically different from what was advertised. That should sound familiar, yet the scale and polish are what have changed.

Security researchers have been documenting the next layer: fake storefronts that increasingly use AI-generated text, imagery, and entire site structures to look more convincing. That matters because social shopping already conditions people to make split-second decisions based on aesthetics. A sleek product page, a believable return policy, and a cluster of plausible reviews can do a lot of persuasive work before the victim ever checks the domain name. In other words, AI does not have to invent a new retail scam. It simply helps fraudsters mass-produce the surface details that make an illegitimate store feel like a normal late-night impulse purchase.

Sextortion With Fabricated Images

Sextortion is terrifying because it exploits shame faster than facts. The FBI has warned of a huge increase in sextortion cases involving children and teens, and security researchers have noted that scammers are now using AI-generated or AI-altered explicit images to intensify that pressure. Instead of waiting for a victim to send intimate material, a criminal can fabricate something that looks real enough to threaten with. That changes the emotional equation. The target is no longer reacting only to what they may have shared. They are reacting to what others might believe they shared.

The scam works by collapsing time. A message arrives claiming the image will be sent to family, classmates, coworkers, or followers unless money is paid immediately. The demand may be small at first, but compliance usually invites more demands, not resolution. AI makes this version harder to spot because the victim’s first instinct is often not to analyze the image but to imagine the fallout if it spreads. The fraudster counts on that panic. In the most dangerous cases, the humiliation is the product. Money is simply the first thing extracted from a victim whose fear has already been weaponized.

Invoices, Vendor Changes, and Other Synthetic Paper Trails

Not all AI scams look flashy. Some look like paperwork, which is exactly why they work. The latest invoice fraud schemes aim at finance teams, administrators, and business owners who process routine requests all day long. ICAEW reported in 2026 that AI helps criminals create convincing invoices at scale, while research it cited found that 44% of businesses had been targeted by invoice fraud and 53% of finance professionals had seen attempted deepfake scams. Once a false invoice or vendor-change request arrives in the normal flow of business, it can pass as ordinary unless someone stops to verify it independently.

That risk grows when synthetic documents are combined with cloned voices or believable email threads. Deloitte has warned that business email compromise is already one of the costliest categories of fraud and that generative AI can let bad actors scale these attacks more cheaply and more broadly. The deeper lesson is that realism is no longer confined to audio and video. The spreadsheet, the PDF, the “updated banking details” email, and the polite follow-up call can all support one another. Fraud is becoming less like a single forged message and more like an entire forged workflow.

Deepfake Selfies and Account Takeover Fraud

The most advanced AI scams do not just imitate people to ask for money; they imitate people to get past security. FINRA has warned that some firms now use selfie photos or videos as part of customer verification and that fraudsters can take images from social media to build deepfakes capable of bypassing those checks. The same regulator has also flagged false identification documents, deepfake audio and video, and imposter sites as part of the emerging threat mix. That means the scam is no longer only about persuading the victim. Sometimes it is about persuading the system.

This is why AI fraud feels qualitatively different from many earlier scams. It attacks trust at two levels at once. First, it can fool the human target through familiar social engineering. Second, it can attempt to fool the technical safeguards designed to catch impostors in the first place. For consumers, that can look like account takeover, fraudulent brokerage activity, or the opening of fake accounts in someone else’s name. For institutions, it raises an uncomfortable truth: identity checks that once felt modern can quickly become outdated when criminals can manufacture a version of a face that looks “real enough” to a machine.
