The sales pitch around artificial intelligence was simple: faster answers, less friction, fewer tedious tasks, and a smoother digital life. Yet many of the tools that arrived with the biggest promises have also introduced a new layer of hassle. Convenience has often come bundled with uncertainty, impersonality, extra verification, and a growing need to double-check what machines confidently produce.

These 18 examples capture where that tension shows up most clearly. Some involve work, some shopping, some communication, and some basic trust online. Together, they reveal a pattern that has become hard to miss: AI has made many everyday systems more powerful, but not always more pleasant.

Search Results That Now Need More Second-Guessing

Search was supposed to become the easiest beneficiary of AI. Instead of clicking through a stack of links, people were promised instant summaries, direct answers, and less digging. In practice, that convenience has introduced a new burden: figuring out whether a polished answer is actually dependable. AI-generated overviews can compress a topic quickly, but they also flatten nuance, miss context, and sometimes present uncertain information with remarkable confidence.

That shift matters because search used to make its process more visible. A user could scan several sources, compare headlines, and judge credibility. Now the first response often arrives already blended and paraphrased, which can save time while reducing transparency. Research has already shown that users click through to traditional links less often when AI summaries appear, and public opinion on those summaries is mixed rather than enthusiastic. The result is a strange tradeoff: less scrolling, but more mental vigilance.

Customer Service That Sounds Smoother but Feels Harder to Escape

AI customer service was supposed to eliminate hold music, speed up resolutions, and answer routine questions at any hour. It has helped with some basic tasks, but it has also created a familiar new irritation: polished conversational systems that keep a problem moving without actually solving it. Many people now recognize the feeling of being routed through a fluent digital helper that apologizes elegantly, repeats the issue, and still cannot fix a billing mistake or make a judgment call.

Part of the annoyance comes from the mismatch between tone and capability. Earlier automated systems sounded obviously robotic, so expectations stayed low. Newer ones sound more human, which makes failure more frustrating. Companies like them because they can handle volume, but even industry discussions still point to empathy and edge cases as major limits. When AI is good enough to keep a person talking, but not good enough to resolve the problem, it does not remove friction. It simply makes the friction more conversational.

Scam Messages That Became More Persuasive

One of the most immediate ways AI made life more annoying is also one of the most worrying: fraud got better. Spam emails, phishing texts, and fake calls used to be easier to spot because they were often clumsy. Bad grammar, odd formatting, and awkward phrasing gave scammers away. Generative AI has helped remove some of those tells, allowing criminals to produce cleaner copy, more personalized messages, and even cloned voices that sound disturbingly real.

That means ordinary caution now has to work harder. A call that sounds like a relative in trouble can be fake. A perfectly written email about a missed package or a banking alert can be fraudulent. U.S. regulators have already responded: the Federal Communications Commission ruled in 2024 that AI-generated voices in robocalls are illegal, and the Federal Trade Commission has warned that a short audio clip can be enough to imitate someone’s voice. The annoyance is not just the volume of scams. It is the new need to distrust messages that no longer look obviously suspicious at first glance.

Job Applications That Turned Into an Arms Race

Hiring was often framed as a place where AI would reduce bias, save time, and help employers spot strong candidates more efficiently. Instead, it has also turned applications into an exhausting contest of optimization. Candidates use AI to rewrite résumés, draft cover letters, and tailor keywords. Employers use AI to filter those materials, score applicants, and narrow huge pools. The process became faster, but not necessarily clearer or more humane.

That escalation has made applying for jobs feel more artificial on both sides. Applicants worry about being screened out by systems they do not understand, while employers worry about floods of polished but interchangeable submissions. The U.S. Equal Employment Opportunity Commission has warned that automated selection tools can produce adverse impact and still fall under anti-discrimination law. The annoyance for ordinary workers is both practical and emotional: more applications can be produced in less time, yet each one can feel less personal, less trustworthy, and more likely to disappear into an invisible scoring system.

Email That Became Easier to Write and Harder to Read

AI was supposed to rescue inboxes by drafting replies, summarizing threads, and reducing writing fatigue. It has done that in part, but it has also helped create more email. When messages are easier to generate, more of them get sent, and many arrive polished enough to seem important even when they say very little. The burden shifts from composing to triaging.

That is why AI can make communication feel efficient and bloated at the same time. Microsoft’s workplace research has shown how widespread generative AI use has become among knowledge workers, many of whom were already struggling with information overload. The result is a new kind of inbox fatigue: longer, smoother, more structured messages that still require a human to decide what matters. Instead of reducing digital clutter, AI often upgrades its presentation. The wording improves, the tone softens, the summaries multiply, and the reader is still left with the hard work of deciding what is urgent, authentic, and worth attention.

Meeting Notes That Are Faster but Not Always Faithful

Automatic transcription and note generation sounded like an obvious improvement. No more scrambling to capture action items, no more missed details, and no more one person stuck taking notes while everyone else talks. In many settings, AI note tools really do help. But they also introduce a quieter annoyance: people often have to review, edit, and correct the record afterward because the summary can sound authoritative while subtly misrepresenting what was actually said.

This is especially frustrating in meetings where tone, hesitation, disagreement, or unfinished ideas matter. A system may produce a neat list of decisions even when the group never truly reached one. It may flatten nuance, mishear names, or turn tentative suggestions into official commitments. In health care and business alike, newer “ambient” documentation tools are being adopted because they promise to lighten the record-keeping load, yet experts still emphasize oversight, consent, and correction. The annoyance is easy to recognize: AI removed the scramble of note-taking but replaced it with a different chore, auditing the machine’s version of reality.

Schoolwork That Became Simpler to Produce and Harder to Trust

Education was expected to benefit from AI through tutoring, instant feedback, and more personalized learning support. Those opportunities are real, but classrooms also became more tense. Teachers now have to wonder whether polished assignments reflect real understanding. Students have to decide when assistance becomes substitution. Even strong work can draw suspicion simply because it looks too clean, too structured, or too fast.

That tension has made learning more complicated, not less. UNESCO has warned that generative AI in education brings major risks around reliability, bias, and governance, even as institutions experiment with productive uses. The problem is not only cheating in the narrow sense. It is the erosion of confidence around what effort looks like. When a student can get an outline, summary, explanation, and draft in seconds, the line between support and outsourcing becomes blurry. That leaves schools managing not just a new tool but a new atmosphere of doubt, one in which convenience has made trust more fragile.

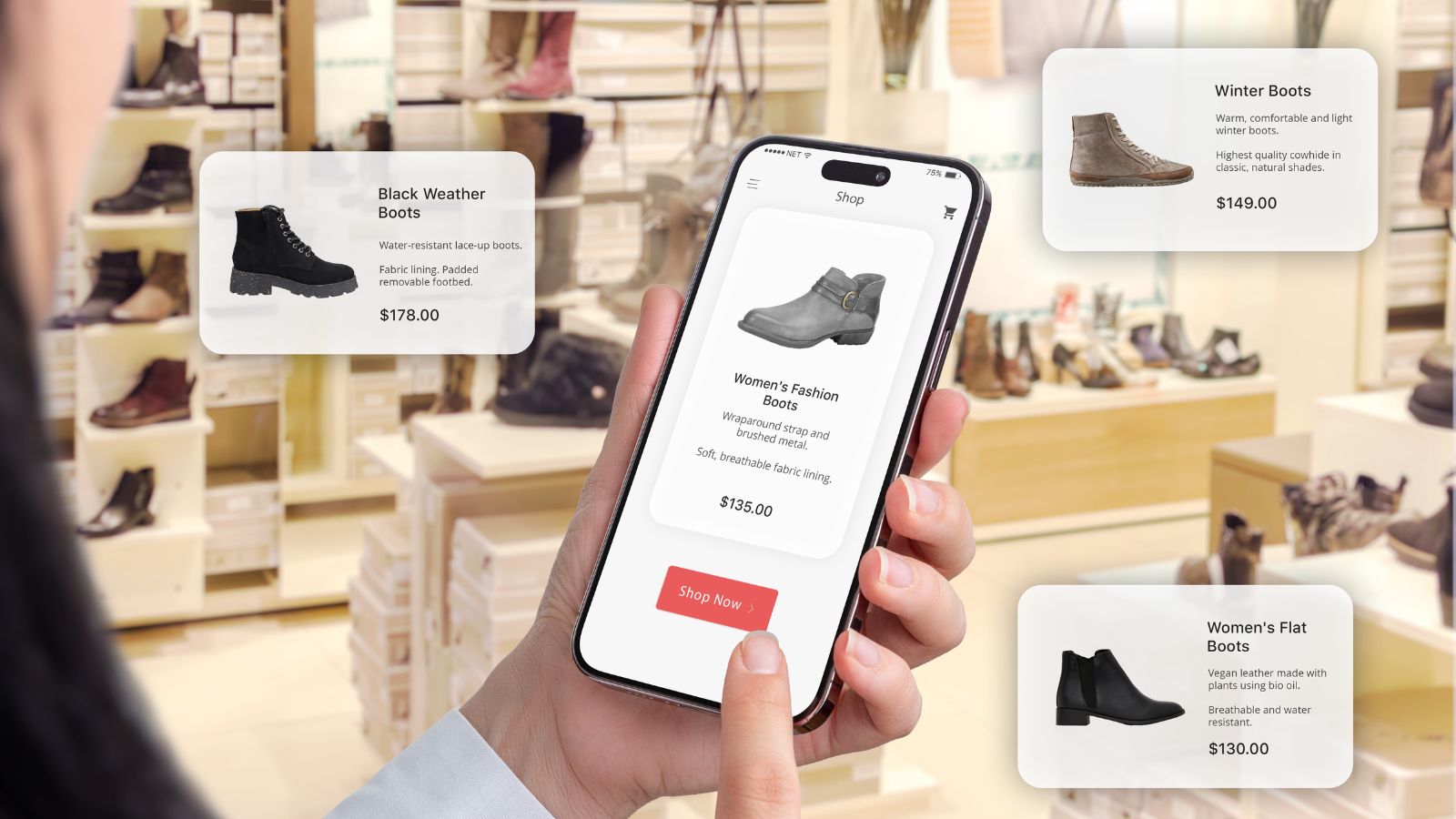

Shopping Recommendations That Feel Personal but Not Necessarily Helpful

AI recommendations were sold as a way to cut through clutter. Instead of browsing endlessly, shoppers would see products matched to their habits, budget, and preferences. That does happen, but the experience can also feel like being funneled into a narrow loop. A few clicks on one item can reshape an entire feed, and the resulting suggestions often reflect what a system thinks will convert, not what a person actually needs.

The situation gets worse when recommendations sit next to questionable reviews, sponsored rankings, or synthetic product content. Regulators have become concerned enough about fake and deceptive reviews that the U.S. Federal Trade Commission finalized a rule banning their sale and purchase. Meanwhile, survey work shows that large shares of the public already recognize AI as part of online shopping recommendations. The annoyance is subtle but persistent: shopping becomes more “personalized,” yet often less exploratory, less trustworthy, and more repetitive. Instead of making choice easier, AI can make the digital shelf feel like a hall of mirrors.

Help Centers That Answer Quickly but Still Miss the Point

Tech support, insurance portals, travel sites, and utility companies increasingly rely on AI-generated help systems to answer routine questions. In theory, that should be a win for everyone. In practice, many people now encounter answers that are fast, fluent, and maddeningly incomplete. A chatbot may summarize policy language, point to a general help page, or restate a problem using friendlier wording without actually addressing the exact issue at hand.

That creates a particular kind of modern annoyance: the experience of being kept busy rather than being helped. The user is offered steps, suggestions, and links, but still ends up hunting for a live agent or digging through original documentation anyway. Because the interface sounds confident, the wasted time can feel even more aggravating. AI did remove some dead ends, but it also created a new maze, one built from competent-sounding approximations. For many basic consumer problems, the answer is no longer unavailable. It is available everywhere except in the form that actually resolves the case.

Coding That Got Faster While Review Got Heavier

AI coding tools genuinely help many developers move faster. They can generate boilerplate, suggest completions, draft tests, and reduce repetitive work. That is why adoption has been so rapid. But the gain in speed has come with a familiar catch: someone still has to inspect the code carefully. A function that appears elegant can still be insecure, brittle, redundant, or subtly wrong.

That has changed the shape of programming work rather than simply shrinking it. Research from GitHub and later studies of workplace coding tools found measurable gains in productivity and developer satisfaction, but they did not remove the need for scrutiny and trust checks. Developers may spend less time staring at a blank screen and more time evaluating whether the machine’s output belongs in a real product. For teams under deadline pressure, that can feel like swapping one annoyance for another. The tedium of writing every line shrinks, but the cognitive strain of reviewing plausible-looking code becomes more central to the job.

News Consumption That Became Faster and Foggier

AI promised to help people keep up with the news by summarizing long reports, condensing developing stories, and translating complex topics into plain language. It has done all of that. It has also contributed to a more confusing information environment, where summaries circulate faster than source material and the line between synthesis, distortion, and fabrication is harder to see.

That matters because many readers already struggle with overload. A concise AI summary can feel useful in the moment, but it also discourages click-through and source comparison. At the same time, watchdog groups and regulators have been tracking how easily AI systems can amplify or repeat false claims. This creates a double annoyance. First, there is too much information. Then there is extra work required to verify which digest, clip, or rewritten headline deserves trust. AI did make news easier to consume in fragments. It also made it easier for fragments to outrun context.

Translation That Became More Available but Less Nuanced

AI translation has made multilingual communication faster and more accessible than ever. Menus, emails, instructions, and conversations can be rendered almost instantly, and that is undeniably useful. But the added convenience often arrives with a new headache: translations that seem polished while quietly losing tone, intent, cultural context, or key distinctions. That problem becomes more serious in low-resource languages, specialized domains, or emotionally loaded conversations.

Research on multilingual language models continues to find hallucinations and context failures, and UNESCO has repeatedly warned that AI systems can reproduce bias and misinformation across educational and cultural settings. For everyday users, the annoyance is not that translation fails completely. It is that it succeeds just well enough to invite overconfidence. A phrase can look natural while carrying the wrong implication. A customer support exchange can be grammatically fine but practically misleading. AI has made translation available everywhere, but not always with the reliability people assume from its fluency.

Medical Paperwork That May Save Time but Add New Friction

Health care is full of administrative burden, so AI seemed destined to be welcomed there. Documentation tools, automated summaries, and claims processing systems have indeed shown promise in reducing clerical strain. Many physicians say administrative relief is one of AI’s clearest early benefits. Yet patients and clinicians are also discovering the other side of that shift: more automation can mean more distance, more opacity, and new opportunities for error or unfair denial.

The friction becomes especially visible when AI is involved in decisions that affect access to care or the way a visit is documented. The American Medical Association has warned about unregulated AI tools in prior authorization, and physicians have expressed concern that automated systems could increase denials or override sound clinical judgment. Meanwhile, WHO guidance stresses caution because generative systems can make mistakes that appear convincing. So even where AI helps remove paperwork, it can replace one type of burden with another: the burden of checking whether a tidy machine process is also a just and accurate one.

Voice Systems That Sound More Human While Remaining Just as Limiting

The old automated phone tree was annoying because it was obviously mechanical. The new AI voice system is annoying for a different reason: it sounds more natural, so people expect more from it. When a system can respond conversationally, recognize interruptions, and mimic the rhythm of a real person, it creates the impression that flexibility has arrived. But often the boundaries are still rigid, and the path to a human remains frustratingly narrow.

At the same time, AI-generated voices introduced a separate layer of distrust. U.S. regulators moved to make AI-generated robocall voices illegal after voice-cloning scams and political misuse raised alarm. That means voice technology now carries two annoyances at once. It can feel too human when it is merely scripted, and too suspicious when it might be fake. A phone call that should feel like the most direct form of communication now asks for more scrutiny than it did before. AI improved the surface realism of voice systems without fully restoring confidence or control.

Photo Editing That Made Every Image Slightly More Debatable

AI image tools have made editing astonishingly easy. Unwanted objects vanish, skies improve, faces sharpen, and old photos can be restored in seconds. For everyday users, that is impressive and often fun. But there is an obvious downside: every image now carries a little more uncertainty. Even ordinary photos can prompt the question of what was altered, cleaned up, invented, or staged by software.

That creeping doubt is one reason major efforts around content credentials and provenance have gathered momentum. Adobe and its partners in the Content Authenticity Initiative have been pushing systems designed to help label and trace digital media in response to growing concern about deepfakes and manipulated content. The broader annoyance is cultural as much as technical. Photos used to feel like imperfect evidence. Now they often feel like negotiable artifacts. AI did make editing more accessible, but it also made authenticity harder to assume, even in cases where no deception was intended.

Social Feeds That Filled Up With More Synthetic Clutter

Social media was already noisy before generative AI arrived. Since then, timelines have become even more crowded with low-cost content: mass-produced images, recycled text threads, fabricated quotes, synthetic commentary, and fake expert-style posts built to capture attention. Not all of it is malicious, but much of it adds to a sense that feeds are becoming harder to trust and harder to enjoy.

Regulators and media researchers have noticed the same pattern. Ofcom has found growing public concern about the prevalence of AI-generated content and increasing difficulty in determining what is real online. NewsGuard and others have separately tracked AI-driven misinformation and content farms. The day-to-day annoyance is easy to understand even without reading those reports. People log on for updates, entertainment, or connection and instead find themselves sorting through polished filler. AI did not invent online clutter. It simply made clutter cheaper, faster, and more scalable than ever.

Banking and Security Checks That Feel Smarter Until They Go Wrong

AI systems are increasingly used to flag suspicious transactions, detect fraud patterns, and automate customer verification. In principle, that should help people by catching problems early. In practice, it can create another kind of everyday irritation: legitimate activity gets flagged, accounts get locked, or transactions get delayed, and the path to explaining the mistake can feel opaque and slow.

This is the paradox of automated trust systems. They are designed to reduce risk at scale, but when they misfire, the individual user often faces a frustratingly impersonal appeals process. Concerns around AI-enhanced fraud only intensify that tension. Financial and communications regulators have been warning about deepfakes, impersonation, and scam content that exploit growing trust gaps. So the system becomes stricter while the environment becomes harder to read. The result is familiar to many consumers: AI helps institutions move faster against fraud, but it can leave ordinary people dealing with extra verification, unexplained holds, and too little room for common-sense exceptions.

Productivity Tools That Reduce Drudgery and Increase Throughput Anxiety

Perhaps the biggest irony is that AI has made “productivity” itself more annoying. It can draft, summarize, brainstorm, organize, and accelerate countless tasks. Yet in many workplaces, those benefits quickly become new expectations. If output is easier to produce, more output gets requested. If notes can be generated instantly, people expect immediate follow-up. If a first draft takes minutes instead of hours, the definition of “done” quietly expands.

Workplace research shows AI adoption surging, with companies increasingly treating it as central to how knowledge work will be organized. But faster production does not automatically create calmer workdays. Often it raises the ceiling on how much can be demanded. Employees save time in one place only to see that time filled elsewhere. The annoyance is structural rather than personal: AI helps remove some drudgery, yet it can also intensify the pace of work. Instead of a lighter load, many people get a faster treadmill.
